Integrated Development Environment (IDE)

An integrated development environment (IDE) is an application that provides programmers with the basic tools to write and test software. In general, an IDE consists of an editor, a compiler (or interpreter), and a debugger, all accessed through a graphical user interface (GUI).

According to Wikipedia, “Python is a widely used high-level, general-purpose, interpreted, dynamic programming language.” Python has been around for decades and is very popular. It is open source and is used for web and Internet development (with frameworks such as Django and Flask), scientific and numeric computing (with the help of libraries such as NumPy and SciPy), software development, and much more.

Text editors alone are not enough for building large systems that integrate many modules and libraries; for that, a good IDE is required.

Here is a list of some Python IDEs and their features to help you choose a suitable one for your machine learning problem.

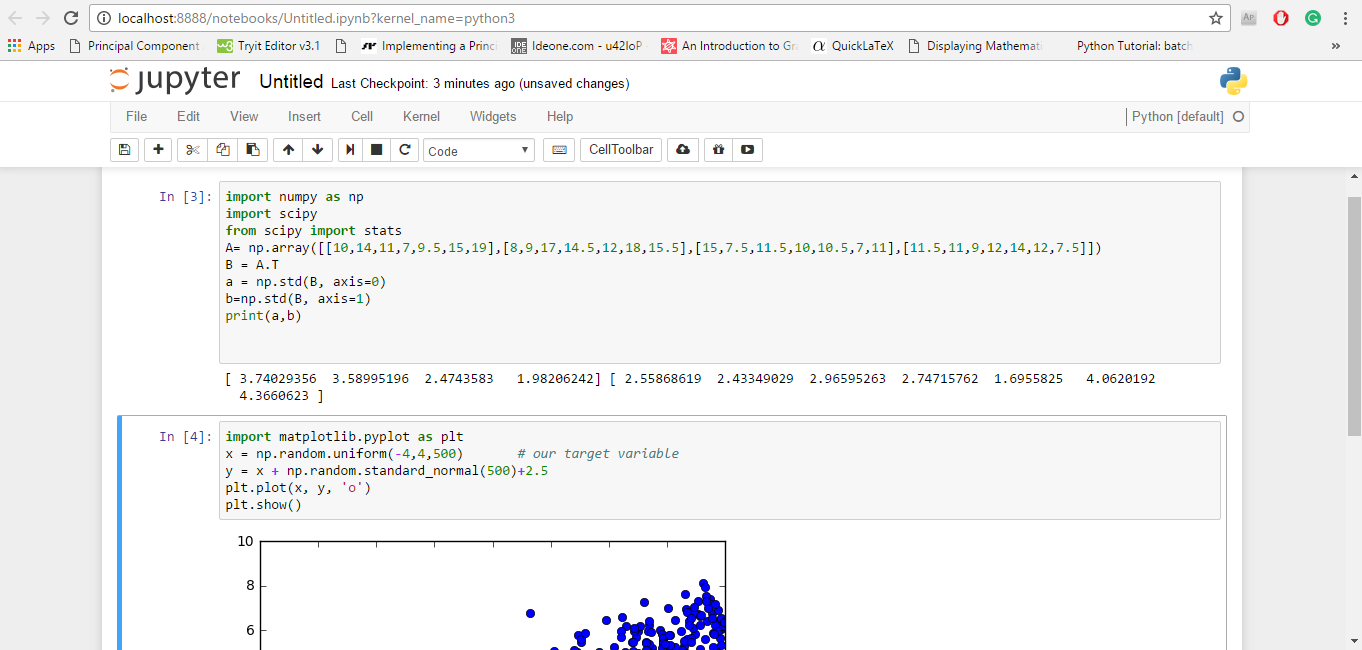

Jupyter/IPython Notebook

Project Jupyter was spun off from IPython in 2014 to support scientific computing and interactive data science across all programming languages.

The IPython project notes that “IPython 3.x was the last monolithic release of IPython. As of IPython 4.0, the language-agnostic parts of the project: the notebook format, message protocol, qtconsole, notebook web application, etc. have moved to new projects under the name Jupyter. IPython itself is focused on interactive Python, part of which is providing a Python kernel for Jupyter.”

Jupyter consists of three components: the notebook web application, kernels, and notebook documents.

Some of its key features are the following:

- It is open source.

- It supports more than 40 languages, including ones popular for data science such as Python, R, Scala, and Julia.

- It allows one to create and share documents that contain equations, visualizations, and, most importantly, live code.

- Code can produce rich output such as videos, images, and LaTeX. In addition, interactive widgets can be used to visualize and manipulate data in real time.

- It offers Big Data integration: one can take advantage of Big Data tools, such as Apache Spark, from Scala, Python, and R, and explore the same data with libraries such as pandas, scikit-learn, ggplot2, and dplyr.

- The Markdown markup language can provide commentary for the code; that is, one can record logic and thought process in the notebook itself rather than in code comments.
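As a rough illustration of the last point, a notebook document is stored as JSON in which Markdown cells and code cells sit side by side. The sketch below builds a minimal two-cell notebook with only the standard library. The cell contents are made up for illustration, and the field layout is assumed to follow version 4 of the notebook format.

```python
import json

# A minimal notebook document, assuming the version 4 notebook format.
# The cell contents are hypothetical examples.
notebook = {
    "nbformat": 4,
    "nbformat_minor": 4,
    "metadata": {},
    "cells": [
        {
            # Commentary lives in a Markdown cell, not in code comments.
            "cell_type": "markdown",
            "metadata": {},
            "source": ["## Cleaning step\n", "Drop rows with missing values."],
        },
        {
            # The code that the commentary describes lives in its own cell.
            "cell_type": "code",
            "execution_count": None,
            "metadata": {},
            "outputs": [],
            "source": ["rows = [r for r in rows if None not in r]"],
        },
    ],
}

# Serialize to the text that would be saved as an .ipynb file.
notebook_json = json.dumps(notebook, indent=1)

# List the cell types after a round trip through JSON.
cell_types = [cell["cell_type"] for cell in json.loads(notebook_json)["cells"]]
print(cell_types)
```

Because the document is plain JSON, it can be shared, versioned, and reopened by any Jupyter installation that has a matching kernel.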

Uses of the Jupyter notebook include data cleaning, data transformation, statistical modelling, and machine learning.

For machine learning in particular, it works well with libraries such as Matplotlib, NumPy, and pandas. Another major feature of the Jupyter notebook is that it displays the plots produced by running code cells.

It is currently used by well-known companies such as Google, Microsoft, and IBM, and by educational institutions such as UC Berkeley and Michigan State University.

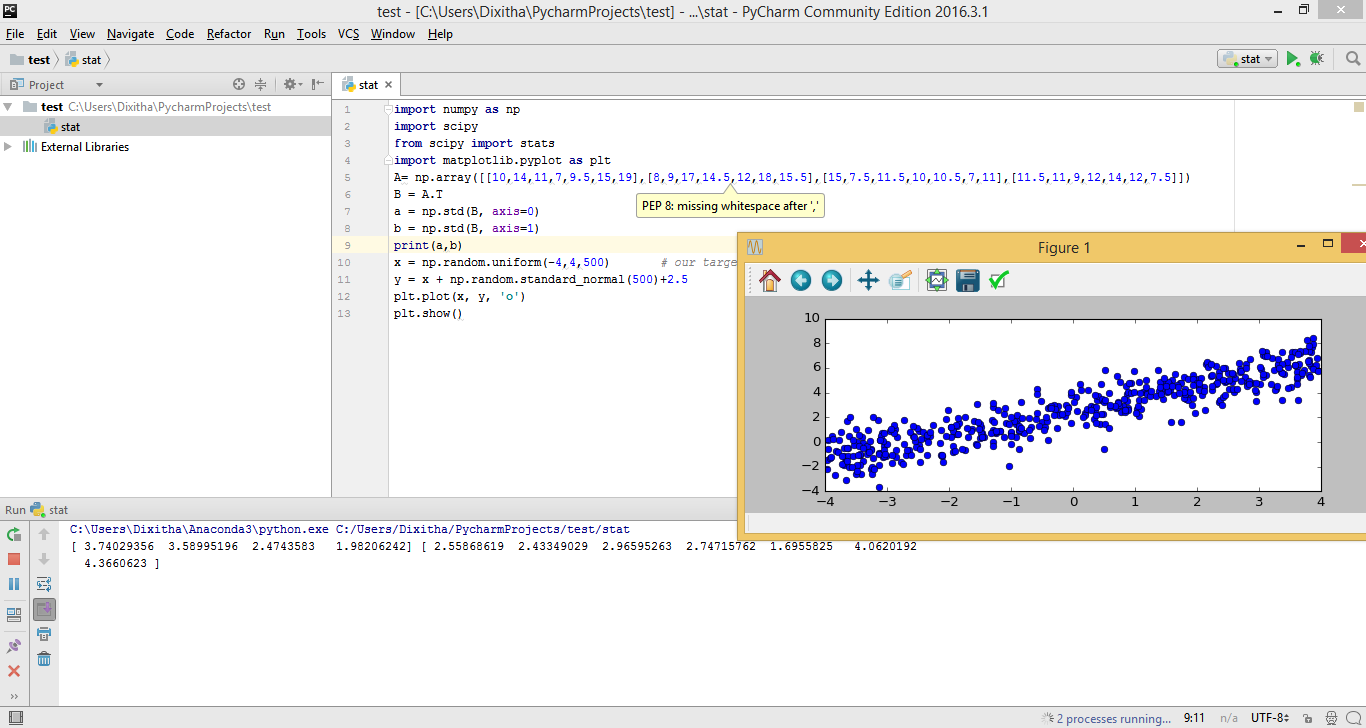

PyCharm

PyCharm is a Python IDE developed by JetBrains, a software company based in Prague, Czech Republic. Its beta version was released in July 2010 and version 1.0 came three months later in October 2010.

PyCharm is a fully featured, professional Python IDE that comes in two versions: PyCharm Community Edition, which is free, and the much more advanced PyCharm Professional Edition, which is available as a 30-day free trial.

PyCharm is used by many large companies, including HP, Pinterest, Twitter, Symantec, and Groupon.

Some of its key features are the following:

- It includes smart code completion for classes, objects, and keywords; auto-indentation and code formatting; and customizable code snippets and formats.

- It shows on-the-fly error highlighting (errors are displayed as you type). It also includes PEP 8 style checks, which help in writing clean code that is easy to maintain.

- It offers fast and safe refactoring.

- It includes a debugger for Python and JavaScript with a graphical UI. One can create and run tests with a GUI-based test runner and coding assistance.

- It has a quick documentation/definition view where one can see the documentation or object definition in place without losing context. Also, the documentation provided by JetBrains is comprehensive and includes video tutorials.
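To make on-the-fly error highlighting concrete, the sketch below shows the general idea using Python's built-in `compile()`: parse the current buffer without running it and report where the first syntax error sits. This illustrates the technique only and is not PyCharm's actual implementation; `find_syntax_error` is a hypothetical helper name.

```python
def find_syntax_error(source):
    """Return (line, message) for the first syntax error, or None if the code parses."""
    try:
        # compile() parses the text without executing it.
        compile(source, "<editor buffer>", "exec")
    except SyntaxError as exc:
        return exc.lineno, exc.msg
    return None


good = "total = 1 + 2\nprint(total)\n"
bad = "total = 1 + 2\nvalue = = 3\n"  # stray second "=" on line 2

print(find_syntax_error(good))
print(find_syntax_error(bad))
```

An editor would typically rerun a check like this after each pause in typing and underline the reported line.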

The most important feature that makes it fit for machine learning is its support for libraries such as scikit-learn, Matplotlib, NumPy, and pandas.

Features such as Matplotlib interactive mode work in both the Python console and the debugger console, where one can plot, manage, and explore graphs in real time.

Also, one can define different environments (Python 2.7; Python 3.5; virtual environments) based on individual projects.
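Per-project environments are not specific to PyCharm; the standard library's `venv` module can create one as well. The sketch below is a minimal example under stated assumptions: the directory name `.venv` and the helper `create_project_env` are illustrative choices, and pip is skipped to keep the example fast.

```python
import tempfile
import venv
from pathlib import Path


def create_project_env(project_dir):
    """Create an isolated environment in <project_dir>/.venv and return its path."""
    env_dir = Path(project_dir) / ".venv"  # hypothetical directory name
    # with_pip=False avoids bootstrapping pip, which keeps the example quick.
    venv.create(env_dir, with_pip=False)
    return env_dir


# Demonstrate in a throwaway directory standing in for a project folder.
with tempfile.TemporaryDirectory() as project_dir:
    env_dir = create_project_env(project_dir)
    # pyvenv.cfg marks the directory as a virtual environment.
    has_config = (env_dir / "pyvenv.cfg").exists()
    print(has_config)
```

An IDE can then be pointed at the interpreter inside that directory so that each project resolves its own set of installed libraries.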

Spyder

Spyder stands for Scientific PYthon Development EnviRonment. Spyder’s original author is Pierre Raybaut, and it was officially released on October 18, 2009. Spyder is written in Python.

- It is open source.

- Its editor supports code introspection and analysis, code completion, horizontal and vertical splitting, and go-to-definition.

- It comes with Python and IPython consoles, and it reports errors in real time, that is, as soon as you type.

- It has a documentation viewer that shows documentation for classes or functions called in either the editor or the console.

- It also provides a variable explorer, a graphical user interface for exploring and editing the variables created while a file runs, such as NumPy arrays.
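The idea behind a variable explorer can be approximated in a few lines: run some code, then list the names it created along with their types and sizes. This is only a sketch of the concept, not Spyder's implementation; `summarize_variables` and the sample script are hypothetical.

```python
def summarize_variables(namespace):
    """Return (name, type name, length or None) for each user-defined variable."""
    rows = []
    for name, value in sorted(namespace.items()):
        if name.startswith("__"):
            continue  # skip entries such as __builtins__ added by exec()
        size = len(value) if hasattr(value, "__len__") else None
        rows.append((name, type(value).__name__, size))
    return rows


# A stand-in for the script being run in the IDE.
script = "samples = [1.5, 2.5, 4.0]\nmean = sum(samples) / len(samples)\n"

namespace = {}
exec(script, namespace)  # run the script and capture the variables it creates

for row in summarize_variables(namespace):
    print(row)
```

A graphical explorer presents the same kind of table and additionally lets the user edit the values in place.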

It integrates NumPy, SciPy, Matplotlib, and other scientific libraries. Spyder works best as an interactive console for building and testing numeric and scientific applications and scripts that rely on these libraries.

Apart from this, it is simple, lightweight software that is easy to install and has very detailed documentation.

Rodeo

Rodeo is a Python IDE built expressly for doing machine learning and data science in Python. It was developed by Yhat and uses the IPython kernel.

- It makes it easy to explore, compare, and interact with data frames and plots.

- The Rodeo text editor comes with auto-completion, syntax highlighting, and built-in IPython support so that writing code gets faster.

- Rodeo comes integrated with Python tutorials. It also includes cheat sheets for quick material reference.

It is useful for researchers and scientists who are used to working in R and the RStudio IDE.

It has many features similar to Spyder's, but it lacks others, such as code analysis and PEP 8 checks. As Rodeo is fairly new, it may gain such features in the future.

Geany

Geany is a lightweight IDE with Python support, originally written by Enrico Tröger in C and C++. It was initially released on October 19, 2005. It is small (about 14 MB for Windows) yet offers the core features expected of an IDE.

- Its editor supports syntax highlighting and line numbering.

- It also comes with features like auto-completion and automatic closing of braces and of HTML and XML tags.

- It includes code folding and code navigation.

- It has a build system that compiles and executes code with the help of external tools.
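A build system of this kind amounts to running external commands on the current file and collecting the results. The sketch below imitates a compile step and an execute step with Python's `subprocess` module. It is an assumption-laden illustration, not Geany's own mechanism; `run_build_command` and the file name `hello.py` are hypothetical.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_build_command(command):
    """Run an external command and return (exit code, captured stdout)."""
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode, result.stdout


with tempfile.TemporaryDirectory() as tmp:
    script = Path(tmp) / "hello.py"  # hypothetical file open in the editor
    script.write_text("print('built and ran')\n")
    # "Compile" step: byte-compile the file with the py_compile module.
    compile_code, _ = run_build_command(
        [sys.executable, "-m", "py_compile", str(script)]
    )
    # "Execute" step: run the file with the same interpreter.
    run_code, output = run_build_command([sys.executable, str(script)])

print(compile_code, run_code, output.strip())
```

An exit code of zero from each step is what an editor would treat as a successful build and run.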

For those who are familiar with RStudio and want to look for options in Python, RStudio added editor support for Python, XML, YAML, SQL, and shell scripts in version 0.98.932, released on June 18, 2014, although its Python support is limited compared with its support for R.

This is not an exhaustive list. There are other Python IDEs, such as PyDev, Eric, and Wing. To learn more about them, see the Python wiki page on IDEs.